Why Training LLMs on Quantum Computers Makes No Sense

The leap from GPU to QPU (quantum processing unit) isn't like the leap from CPU to GPU. It's fundamentally different.

Everyone thinks quantum computers will train AI models faster.

They won’t. At least not the way you think.

Here’s why: Training Meta’s Llama 3.1 models, including the 405-billion-parameter flagship, would take roughly 4,486 years on a single GPU.

Throw 16,000 GPUs at it?

Three months.

That’s parallel computing. It works. It works really well.
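The arithmetic behind those two numbers is easy to check. The sketch below divides the single-GPU figure evenly across the cluster, assuming perfect linear scaling (real clusters lose some time to communication and restarts). The function name is made up for illustration.

```python
# Back-of-the-envelope check of the figures quoted above.
# ASSUMPTION: perfect linear scaling, i.e. the work splits evenly
# across GPUs with no communication or failure overhead.

SINGLE_GPU_YEARS = 4_486   # figure quoted in the article
NUM_GPUS = 16_000          # figure quoted in the article
DAYS_PER_YEAR = 365

def wall_clock_days(single_gpu_years: float, num_gpus: int) -> float:
    """Ideal wall-clock time in days when the work is split evenly."""
    return single_gpu_years * DAYS_PER_YEAR / num_gpus

days = wall_clock_days(SINGLE_GPU_YEARS, NUM_GPUS)
print(f"{days:.0f} days, about {days / 30:.1f} months")
```

Under that idealized assumption the result lands a little over three months, which is consistent with the figure above.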

The Problem Everyone Misses

Training AI models comes down to matrix multiplication. Massive grids of numbers multiplying together. For Llama 3.1, that’s on the order of 10^25 floating-point operations.
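To see where a number of that size comes from, here is a rough estimate using the common "6 × parameters × tokens" rule of thumb for training compute. Both the rule and the token count are assumptions brought in for illustration, not figures from this article.

```python
# Rough training-compute estimate.
# ASSUMPTION: the "6 * N * D" rule of thumb, about 6 floating-point
# operations per parameter per training token.
# ASSUMPTION: a training set of roughly 15 trillion tokens.

PARAMS = 405e9               # 405 billion parameters (from the article)
TOKENS = 15e12               # ASSUMED: ~15 trillion training tokens
FLOPS_PER_PARAM_TOKEN = 6    # ASSUMED rule-of-thumb constant

def training_flops(params: float, tokens: float) -> float:
    """Approximate total floating-point operations for one training run."""
    return FLOPS_PER_PARAM_TOKEN * params * tokens

total = training_flops(PARAMS, TOKENS)
print(f"{total:.2e} FLOPs")
```

With these inputs the estimate falls between 10^25 and 10^26, the same order of magnitude as the figure above.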

So naturally, people ask: can’t quantum computers just do all that math simultaneously?

It’s the wrong question.

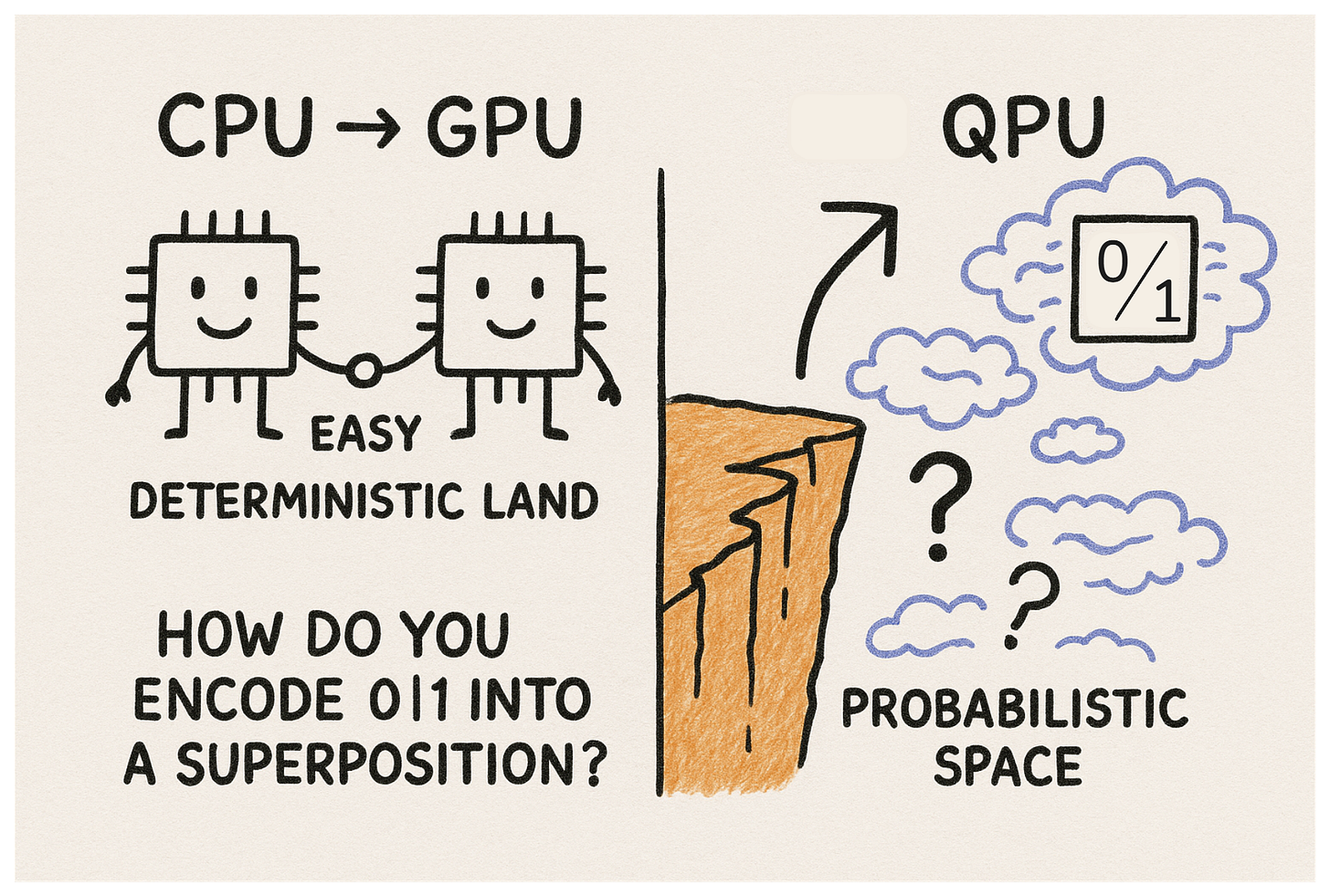

Why GPUs Aren’t CPUs

When we went from CPU to GPU, the transition was seamless. Both are transistor-based. Both process binary information—zeros and ones carried through electrical signals.

Deterministic. Predictable. Compatible.

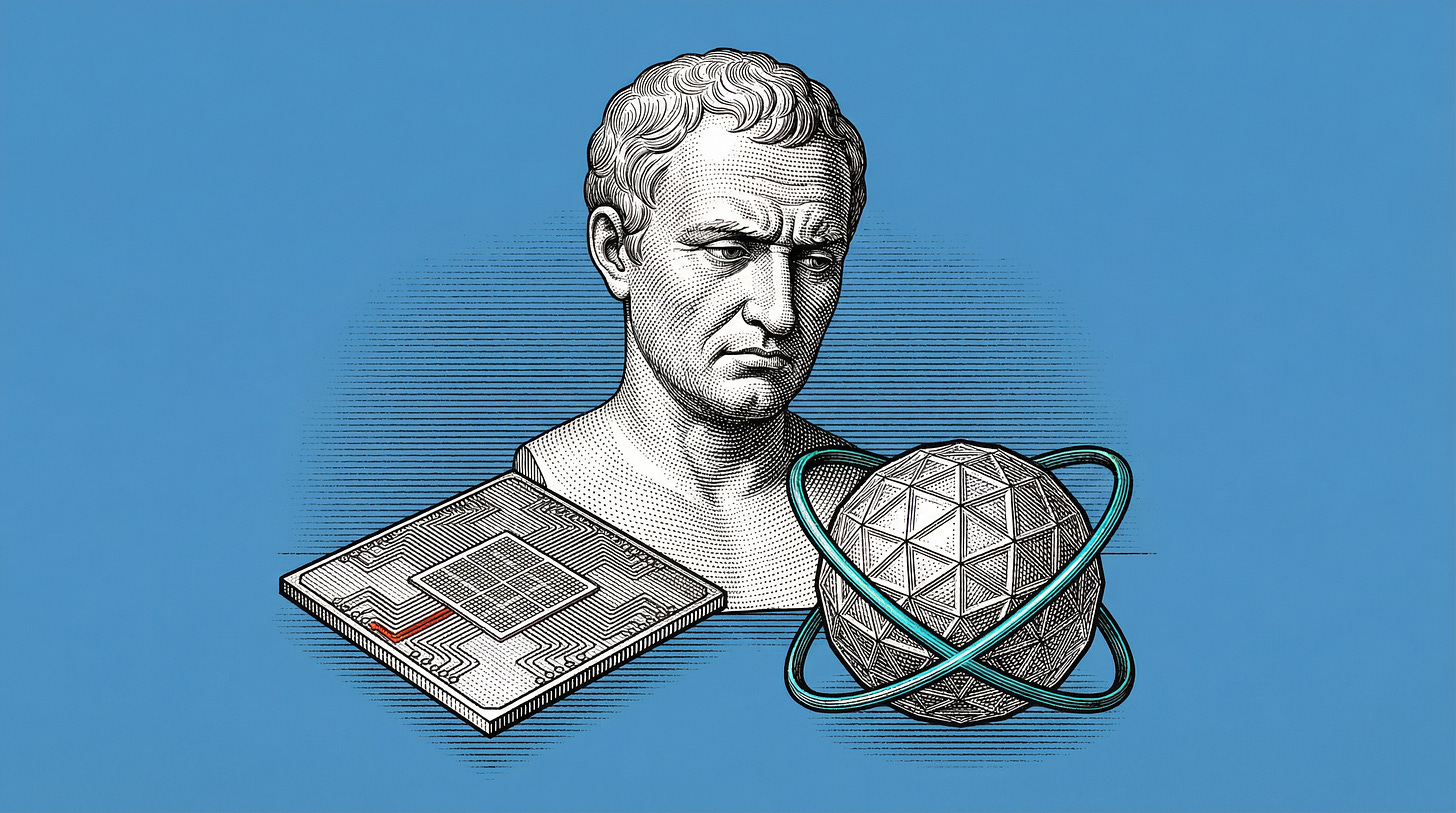

The leap from GPU to QPU is completely different.

CPUs and GPUs store data deterministically: zero or one.

Quantum computers store data probabilistically: a qubit can sit in a superposition of zero and one, and only settles on one of them when measured.

To use quantum computers for AI training, you’d need to encode deterministic tokens (stored as zeros and ones) into quantum states (probabilistic superpositions).
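One way to picture that encoding step is amplitude encoding, where a classical vector is normalized and used as the amplitudes of a quantum state. The article doesn’t name a scheme, so this choice is an assumption, and the helper names are hypothetical. The sketch is a plain-Python simulation with real-valued amplitudes only, not code for actual quantum hardware.

```python
import math
import random

def amplitude_encode(values):
    """Normalize a classical vector so its squared entries sum to 1.

    Hypothetical helper: the result can be read as the amplitudes of a
    quantum state (real-valued only, for simplicity).
    """
    norm = math.sqrt(sum(v * v for v in values))
    if norm == 0:
        raise ValueError("cannot encode an all-zero vector")
    return [v / norm for v in values]

def measure(amplitudes, shots, seed=0):
    """Simulate measurement: each shot returns one basis-state index,
    drawn with probability equal to the squared amplitude."""
    rng = random.Random(seed)
    probs = [a * a for a in amplitudes]
    return rng.choices(range(len(amplitudes)), weights=probs, k=shots)

# A toy 4-number "embedding" fits in the amplitudes of 2 qubits (2^2 = 4).
embedding = [3.0, 1.0, 2.0, 1.0]
state = amplitude_encode(embedding)
probs = [a * a for a in state]
samples = measure(state, shots=1000)

print("probabilities:", [round(p, 3) for p in probs])
print("first 10 measurement outcomes:", samples[:10])
```

The values go in as exact numbers but come back out only as random samples. Recovering them takes many repeated measurements, which is the mismatch the next lines point at.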

But here’s the thing—

If you force quantum computers to think deterministically, why use them at all?

You’re asking them to abandon the very thing that makes them quantum: superposition.

The Real Question

What are quantum computers actually good for in AI?

Not competing with parallel computing for training models. That’s already solved.

Think about CPUs and GPUs. GPUs are clearly better for parallel tasks requiring heavy computation. But we still use CPUs for browsing the internet, editing Excel spreadsheets, and checking email.

We don’t need GPUs for everything.

Same logic applies here:

Do we really need quantum computers to train AI models when parallel computing is getting the job done just fine?

The Market Problem

If you want quantum computers to succeed, you need a market.

Markets create incentives.

Incentives drive innovation, investment, and competition.

Right now, the biggest market is AI. Excess capital is flooding the AI industry. Some of that capital could flow toward quantum computing—if quantum computers work synergistically with GPUs rather than trying to replace them.

Jensen Huang, Nvidia’s CEO, said Nvidia will be “a relic of the past,” pointing to quantum computers’ potential to overtake GPUs the same way GPUs overtook CPUs.

But he also sympathized with quantum computing’s harsh reality. When Nvidia competed for funding, most R&D budgets went to CPUs. Nvidia found its market in gaming—a huge opportunity with a low bar.

They leveraged gaming to grow their footprint.

Now Nvidia is the most valuable company in the world. Bigger than all US and Canadian banks combined.

Quantum computing needs its gaming industry. Its low-hanging fruit. Its iPhone moment.

The Scaling Trap

The quantum computing industry has been racing to scale. But scaling here doesn’t mean what you think.

In classical computing, Moore’s Law guided scaling: the number of transistors on a chip doubles roughly every two years.

For quantum computing, Moore’s Law is actually too slow.

In 2024, most frontier quantum labs had a few hundred qubits. Doubling every two years means five doublings in a decade, a 32x increase. That puts a 300-qubit machine at fewer than 10,000 qubits by 2034.
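The projection is simple compounding. Here is a minimal sketch; the starting qubit counts are illustrative assumptions standing in for "hundreds", not measurements from any specific lab.

```python
def projected_qubits(start_qubits: int, start_year: int, end_year: int,
                     doubling_years: int = 2) -> int:
    """Qubit count after doubling once every `doubling_years` years."""
    doublings = (end_year - start_year) // doubling_years
    return start_qubits * 2 ** doublings

# ASSUMED starting points for a 2024 machine with "hundreds" of qubits.
for start in (100, 200, 300):
    print(f"{start} qubits in 2024 -> {projected_qubits(start, 2024, 2034)} in 2034")
```

Any starting count below about 312 stays under 10,000 after five doublings, since 10,000 / 32 is roughly 312.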

Not good enough.

And here’s the kicker: even with hundreds of qubits available right now, we can’t use them to train AI models.

Why?

Errors.

Errors in quantum computers aren’t software bugs. They come with the physics.

Classical computers can’t tolerate errors. Everything must be deterministic—zero or one.

But qubits are probabilistic and incredibly sensitive. Harnessing the quantum mechanics that power them requires completely different algorithms than classical computing. Different quantum gates to manipulate qubits precisely.

Companies like IonQ, IBM, Rigetti, D-Wave, Nvidia, and Microsoft are all taking different approaches: trapped ions, superconducting circuits, quantum annealing.

Each approach tackles common roadblocks: coherence time, scalability, gate fidelity.

There’s a lot to unpack. Also a lot of potential for early adopters to take risks and innovate.

The Ultimate Question

How will quantum computers actually pair up with AI?

Maybe quantum computers are better off focusing on different industries entirely.

Maybe LLMs will simply be something we run on GPUs, the same way we run Excel on CPUs now. Niche problems with niche solutions.

Or maybe—

We’ll come up with entirely new ways of harnessing knowledge that we couldn’t even consider with classical computers.

Ways we haven’t imagined yet.

The question isn’t whether quantum computers will revolutionize AI.

It’s whether we’re aiming at the right problem in the first place.

The Ferrari-in-traffic analogy works perfectly. Problem selection matters more than raw capability.

Here's the TL;DR:

• GPUs already solved deterministic parallel computation efficiently.

• Quantum systems operate probabilistically by design.

• Forcing quantum into LLM training wastes its strengths.

• Hardware shifts only matter when paired with the right problems.

• Quantum needs a native use case, not a benchmark race.