What Mickey 17 Gets Right About the Future of Human Labor

What actually breaks when your body is stateless, your mind is a backup, and death is just a redeploy.

What happens when your body becomes as disposable as a crashed Docker container? When death turns into a system reset, and your consciousness gets flashed onto a freshly printed vessel like firmware onto a new device?

Bong Joon-ho’s Mickey 17 is more than a sci-fi thought experiment. It reads like a plausible engineering spec for how a collapsing society might ration human life. The story does not treat people as citizens. It treats them as deployable infrastructure. And the scariest part is how little imagination it takes to get there from where you are right now.

The Economics of Expendability

The story starts with debt. Not drama. Not cosmic stakes. Debt.

The film’s 2026 feels uncomfortably close. Widespread insolvency pushes entire social layers past poverty and into systemic collapse. Earth hits its resource limits. Unemployment spikes. And in that vacuum, a new job category appears - the Expendable.

Think of it as the gig economy pushed to its logical endpoint. You do not trade your time or your labor. You trade your body. Your life. You sign a contract. You get printed. You die. You get reprinted. You do it again.

Expendables function as biological telemetry probes. They walk into lethal environments so the protected population does not have to. They gather data from alien ecosystems, sample unknown pathogens for vaccine development, and stress-test terraforming viability before anyone important arrives. They are human test cases running in production, and the production environment will kill them.

If you have ever deployed a canary release - pushing a small slice of traffic through an untested path to see what breaks before you roll it out to everyone - you already understand the Expendable program. The difference is that the canary is a person.
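A canary split takes only a few lines to sketch. This is a minimal illustration, not any particular load balancer's API: `route` and `canary_percent` are hypothetical names, and the sketch assumes every request carries a stable ID string. Hashing the ID instead of picking at random keeps a given request on the same path every time it is retried.

```python
import hashlib


def route(request_id: str, canary_percent: int) -> str:
    """Send a stable slice of traffic (canary_percent out of 100) down the untested path."""
    # Hash the ID into one of 100 buckets; the same ID always lands in the same bucket.
    bucket = int(hashlib.sha256(request_id.encode()).hexdigest(), 16) % 100
    return "canary" if bucket < canary_percent else "stable"


if __name__ == "__main__":
    ids = [f"req-{i}" for i in range(10_000)]
    canary_share = sum(route(r, 5) == "canary" for r in ids) / len(ids)
    print(f"canary share: {canary_share:.3f}")
```

Run it and the canary share should land close to 5 percent. In the Expendable program, the canary slice is a single person.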

Mind as Software, Body as Hardware

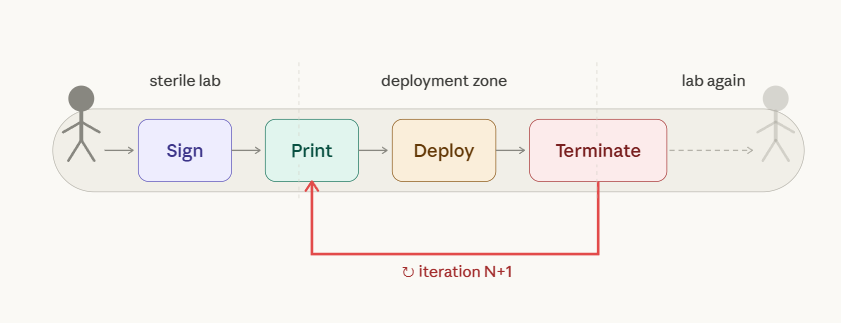

The central breakthrough in Mickey 17 is mind-body decoupling. The film calls the two halves Cortex and Vessel, but if you have spent any time around infrastructure, the mapping is immediate.

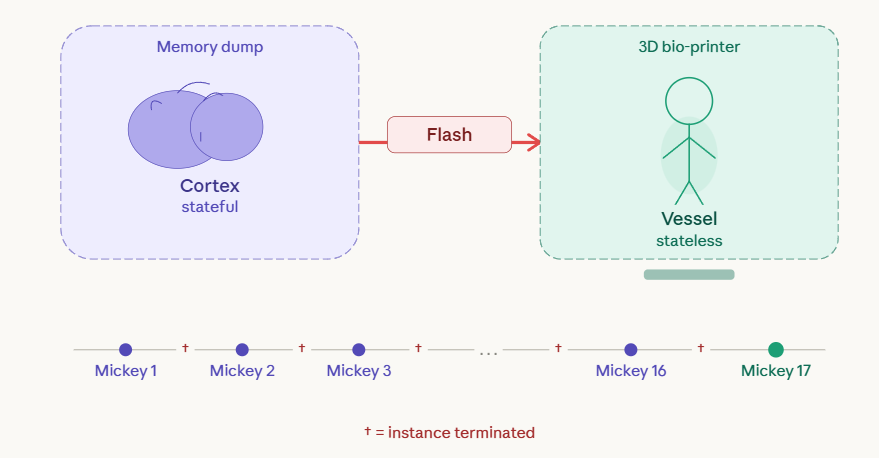

The mind is a Docker image - a digitized brain state carrying memories, personality, and cognitive rules. The body is a container - a 3D-printed, replaceable biological runtime. Death is a terminated instance. You redeploy the same image onto fresh hardware and keep going.

The body becomes stateless and disposable. Identity lives entirely in the saved state. Your continuity depends not on your flesh surviving, but on the fidelity of your memory dump. If the backup is clean, you wake up as yourself. If it is not, you wake up as something else wearing your face.

This should feel familiar. A stable model runs across many deployments while the environment and substrate change underneath it. The person stays consistent across copies, as long as the state stays intact. Lose the state, and you lose the person. The hardware was never what mattered.
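The image-versus-container split can be sketched as a toy model. Everything here is a hypothetical assumption, not Docker's API: `Image`, `Container`, `deploy`, and `snapshot` are invented names. The "body" holds nothing but a pointer to its image plus whatever it has lived through since boot, and `snapshot` plays roughly the role `docker commit` plays for real containers. Anything not folded back into a new image before termination is gone.

```python
from dataclasses import dataclass, field
from itertools import count


@dataclass(frozen=True)
class Image:
    """Immutable saved state (the 'mind'): memories up to the last snapshot."""
    name: str
    memories: tuple[str, ...] = ()


@dataclass
class Container:
    """Disposable runtime (the 'body') booted from an image."""
    image: Image
    instance_id: int
    alive: bool = True
    # Experience gathered since boot; lost on termination unless snapshotted.
    new_memories: list[str] = field(default_factory=list)

    def live(self, event: str) -> None:
        self.new_memories.append(event)

    def snapshot(self) -> Image:
        """Fold runtime experience into a new immutable image."""
        return Image(self.image.name, self.image.memories + tuple(self.new_memories))


_ids = count(1)


def deploy(image: Image) -> Container:
    """Boot a fresh container; only the image carries identity across deaths."""
    return Container(image=image, instance_id=next(_ids))


def terminate(container: Container) -> None:
    container.alive = False


if __name__ == "__main__":
    mickey = Image("mickey", ("signed the contract",))
    body = deploy(mickey)
    body.live("surveyed the ice field")
    saved = body.snapshot()
    body.live("last minutes before death")  # never snapshotted
    terminate(body)

    reprint = deploy(saved)
    print(reprint.image.memories)  # ('signed the contract', 'surveyed the ice field')
```

The reprint boots with everything up to the snapshot and nothing after it. That is the fidelity problem in miniature: the new body is only as complete as the last clean dump.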

The Engineering Problem Nobody Talks About

This entire setup collapses unless you solve two hard engineering problems. The film glosses over both, which is exactly why they are worth thinking through seriously.

Genetic fidelity. Every reprint needs 1:1 DNA replication. Drift across iterations degrades the vessel. Worse, it changes the brain substrate hosting the mind. A slightly different brain running the same cognitive image introduces subtle errors. Over enough reprints, Mickey N+1 is not the same person as Mickey N - not because the software changed, but because the hardware drifted. This is the biological equivalent of running the same application on increasingly divergent chipsets and expecting identical behavior.
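The film does not say whether each body is printed from a stored master template or copied from the previous body, so here is a toy comparison of both under loudly stated assumptions: a random 10,000-base string stands in for the genome, and every copied base has an independent 0.1 percent chance of substitution. `reprint`, `drift`, and the error model are all hypothetical.

```python
import random

BASES = "ACGT"


def reprint(template: str, error_rate: float, rng: random.Random) -> str:
    """Copy a sequence base by base; each base has `error_rate` chance of a substitution."""
    out = []
    for base in template:
        if rng.random() < error_rate:
            out.append(rng.choice([b for b in BASES if b != base]))
        else:
            out.append(base)
    return "".join(out)


def hamming(a: str, b: str) -> int:
    """Number of positions where two equal-length sequences differ."""
    return sum(x != y for x, y in zip(a, b))


def drift(original: str, generations: int, error_rate: float,
          chained: bool, seed: int = 0) -> int:
    """Distance from the original after `generations` reprints.

    chained=True copies each body from the previous one, so errors compound;
    chained=False always prints from the stored master template.
    """
    rng = random.Random(seed)
    current = original
    for _ in range(generations):
        source = current if chained else original
        current = reprint(source, error_rate, rng)
    return hamming(original, current)


if __name__ == "__main__":
    rng = random.Random(42)
    genome = "".join(rng.choice(BASES) for _ in range(10_000))
    for chained in (True, False):
        d = drift(genome, generations=17, error_rate=0.001, chained=chained)
        print("chained" if chained else "from master", d)
```

In expectation, chained copying drifts roughly in proportion to the number of generations, while printing from the master keeps the error at a single copy's worth no matter how many times Mickey dies. It is the same reason infrastructure teams rebuild from a golden image instead of cloning the last running server.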

Memory injection. The brain dump needs perfect continuity. Zero lost time between the death of Mickey N and the boot of Mickey N+1. Any gap creates discontinuity. Any corruption creates a different person who believes they are the original. And if the memory stream is unprotected - if someone intercepts and edits it - you are not just killing a person. You are rewriting who they wake up as.

That is not identity theft. That is identity hijacking at the firmware level. You cannot patch it after the fact because the person who would notice the tampering is the person who was tampered with.
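One standard defence against a tampered memory stream is a message authentication code, computed when the snapshot is captured and checked before boot. A minimal sketch using Python's standard `hmac` module, assuming the snapshot is a byte string and that a key exists which the party who might edit memories does not hold; `seal` and `verify_before_boot` are hypothetical names.

```python
import hashlib
import hmac


def seal(snapshot: bytes, key: bytes) -> bytes:
    """Tag a brain snapshot at capture time with HMAC-SHA256."""
    return hmac.new(key, snapshot, hashlib.sha256).digest()


def verify_before_boot(snapshot: bytes, tag: bytes, key: bytes) -> bool:
    """Return False (refuse to boot) if the snapshot no longer matches its tag."""
    expected = hmac.new(key, snapshot, hashlib.sha256).digest()
    # compare_digest avoids leaking timing information about the tag.
    return hmac.compare_digest(expected, tag)


if __name__ == "__main__":
    key = b"held-by-an-independent-auditor"  # hypothetical key custody
    snap = b"memories up to the moment of death"
    tag = seal(snap, key)
    print(verify_before_boot(snap, tag, key))                 # True
    print(verify_before_boot(snap + b" (edited)", tag, key))  # False
```

The check has to run outside the person being restored, since they cannot notice the edit themselves. It also only works if whoever controls the printer does not control the key: an operator holding the key can edit the memories and simply re-seal them.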

The Race Condition That Breaks Everything

Here is where the architecture falls apart completely, and where the film finds its real tension.

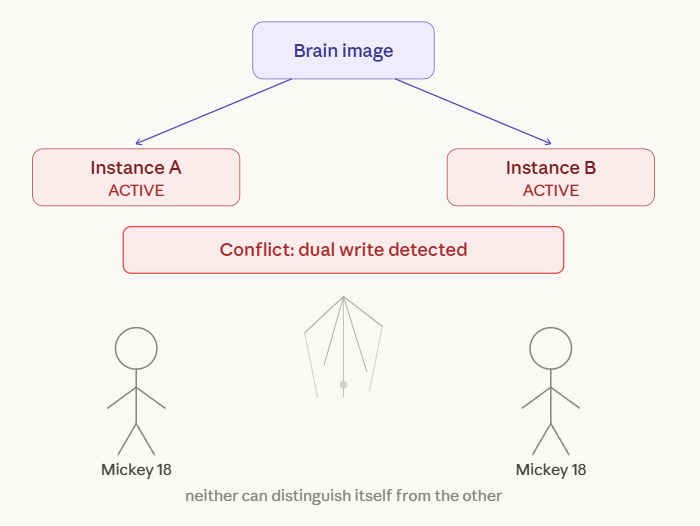

What happens when two bodies get printed from the same brain image at the same time?

You get a race condition.

If you have built distributed systems, you know this failure mode. Two processes share a resource, both assume exclusive access, and neither knows the other exists until they collide. In Mickey 17, the shared resource is an identity. Two Mickey instances boot from the same snapshot, each carrying a full and legitimate claim to being the real continuation.

Which one has authority? Which one counts? The system was designed around single-instance governance - one Mickey at a time, no overlap, no ambiguity. The moment that assumption breaks, the entire design collapses.
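Single-instance governance is a mutual-exclusion problem. A sketch with a hypothetical `IdentityRegistry`: `claim_unsafe` shows the check-then-act race, where two booting instances can both see the identity as free before either records its claim, and `claim` closes the gap by making the check and the write one step under a lock.

```python
import threading


class IdentityRegistry:
    """Tracks which instance currently holds each identity."""

    def __init__(self) -> None:
        self._holders: dict[str, int] = {}
        self._lock = threading.Lock()

    def claim_unsafe(self, identity: str, instance_id: int) -> bool:
        # Check-then-act: another thread can run between the check and the write,
        # so two callers can both believe they won.
        if identity not in self._holders:
            self._holders[identity] = instance_id
            return True
        return False

    def claim(self, identity: str, instance_id: int) -> bool:
        # Holding the lock makes check and write atomic: exactly one caller wins.
        with self._lock:
            if identity in self._holders:
                return False
            self._holders[identity] = instance_id
            return True


if __name__ == "__main__":
    registry = IdentityRegistry()
    results = []
    start = threading.Barrier(2)

    def boot(instance_id: int) -> None:
        start.wait()  # release both "bodies" at the same moment
        results.append(registry.claim("mickey", instance_id))

    threads = [threading.Thread(target=boot, args=(i,)) for i in (17, 18)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    print(sorted(results))  # [False, True]
```

A lock only helps when every printer shares one registry in one process. Across machines you need a distributed lock or lease service, and that is where the questions of hierarchy and failover begin.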

A workable model would need hierarchy. A Prime - the original person used for the initial genetic sequence and brain scan - acts as the master node. The reprints are child pods. If the Prime dies, authority fails over to a designated child node through an explicit protocol. Without that protocol, you get identity collision. Two versions of the same person, each with an equal claim, each insisting the other is the copy.
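An explicit failover protocol can be as simple as a fixed succession order that every node evaluates the same way. A sketch, assuming each node has a unique `succession_rank` (0 for the Prime) and that all nodes share an accurate view of who is alive; `Node` and `current_authority` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    name: str
    succession_rank: int  # 0 = Prime; lower ranks inherit authority first (assumed unique)
    alive: bool = True


def current_authority(nodes: List[Node]) -> Optional[Node]:
    """The live node with the lowest succession rank holds authority.

    Every node that sees the same membership list reaches the same answer,
    so there is no tie to argue over. Returns None if nobody is alive.
    """
    live = [n for n in nodes if n.alive]
    return min(live, key=lambda n: n.succession_rank, default=None)


if __name__ == "__main__":
    cluster = [Node("Prime", 0), Node("Mickey 17", 1), Node("Mickey 18", 2)]
    cluster[0].alive = False
    print(current_authority(cluster).name)  # Mickey 17
```

The rule itself is trivial. The assumption is not: if a network partition gives two groups different ideas of who is alive, each group can elect its own authority.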

This maps directly to split-brain behavior in distributed databases. Two nodes accept writes simultaneously, diverge from each other, and you cannot merge them back without data loss. In Mickey’s case, the data you lose is a human being.
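A common guard against split-brain writes is a fencing token: every failover hands the new authority a strictly larger epoch number, and the storage layer rejects writes carrying an older one. A minimal sketch with a hypothetical `IdentityStore`, assuming a single store that every instance must write through.

```python
class IdentityStore:
    """Accepts writes only from the newest authority epoch seen so far."""

    def __init__(self) -> None:
        self.highest_epoch = 0
        self.log: list[tuple[int, str]] = []

    def write(self, epoch: int, entry: str) -> bool:
        if epoch < self.highest_epoch:
            return False  # stale authority: fenced off instead of silently diverging
        self.highest_epoch = epoch
        self.log.append((epoch, entry))
        return True


if __name__ == "__main__":
    store = IdentityStore()
    store.write(1, "Mickey 17: reports for duty")
    store.write(2, "Mickey 18: reports for duty")  # failover issued epoch 2
    print(store.write(1, "Mickey 17: signs off on the mission"))  # False
```

Fencing does not merge diverged histories. It prevents the divergence by making the stale side lose its writes, so one history wins instead of two quietly coexisting.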

The Horror of Normalization

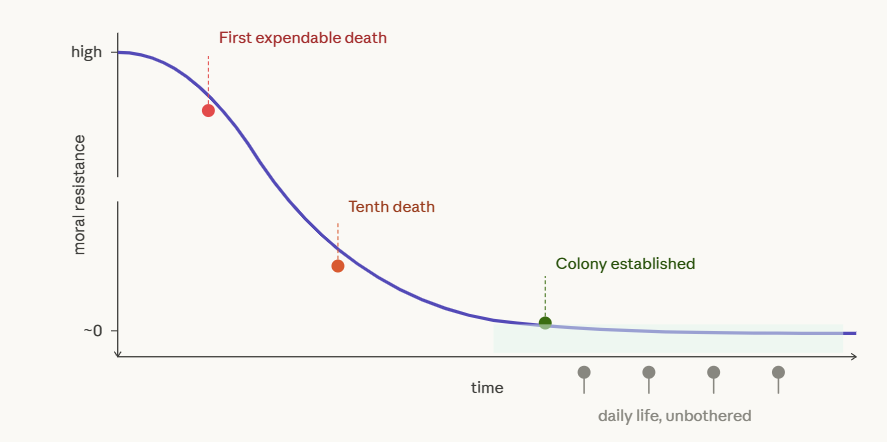

The disturbing part of Mickey 17 is not the technology. It is how fast everyone accepts it.

Nobody in the film is horrified by the Expendable program for long. The ethics get processed, filed away, and overwritten by operational urgency. There is a mission. Resources are scarce. Someone has to die so the colony survives. The system works. So people stop questioning it.

This is the real warning, and it has nothing to do with 3D-printed bodies. You already live in a world that normalizes disposability. Gig workers with no benefits, contract soldiers in privatized wars, content moderators burning out so platforms stay clean. The distance between “acceptable cost of doing business” and “Expendable” is shorter than you think. Mickey 17 just removes the pretense by making the disposability literal.

The film forces questions that do not have clean answers. If you can copy a mind indefinitely, individual sovereignty dissolves - you are not a person, you are a template. If death is reversible, murder becomes an operational inconvenience rather than an irreversible act, and accountability evaporates with it. If humans become disposable, the only question left is who gets to set the disposal policy. And that question is always answered by whoever holds power, not by whoever holds a moral argument.

A society running this system needs governance around duplication, memory ownership, and the right to exist as a single instance. Without those guardrails, human life becomes a resource you allocate, scale, and terminate based on need.
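Guardrails like these could be enforced as an admission check that runs before any body is printed. A hypothetical sketch: `ReprintRequest`, `admit`, and both rules are illustrative assumptions, one for memory ownership (the stored mind cannot be restored without its owner's consent) and one for the right to exist as a single instance.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class ReprintRequest:
    identity: str
    owner_consent: bool  # has the person these memories belong to agreed to this restore?


def admit(request: ReprintRequest, live_instances: Dict[str, int]) -> Tuple[bool, str]:
    """Hypothetical admission check run before any body is printed."""
    # Memory ownership: the stored mind belongs to the person, not the operator.
    if not request.owner_consent:
        return False, "rejected: no consent from the memory owner"
    # Right to exist as a single instance: never boot a second live copy.
    if live_instances.get(request.identity, 0) > 0:
        return False, "rejected: a live instance already exists"
    return True, "admitted"


if __name__ == "__main__":
    print(admit(ReprintRequest("mickey", True), {"mickey": 1}))
    # (False, 'rejected: a live instance already exists')
```

The code is the easy part. As argued above, the real question is who gets to edit `admit`.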

What This Film Actually Gets Right

Mickey 17 warns about a trajectory that is already visible if you know where to look. Efficiency pressure, economic desperation, and technical capability converge into a system built on disposable people. The technology is fictional. The incentive structure is not.

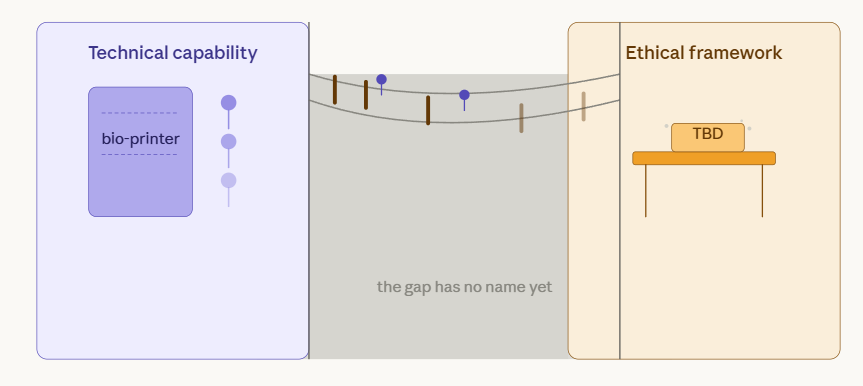

The open question is governance. If mind-body decoupling becomes possible - and pieces of it are already in active research - the technology will not protect you. Rules, enforcement, and power structures will decide whether people remain people or become containers you spin down when the cost-benefit calculation changes.

The film does not answer that question. It does not have to. It just shows you what happens when nobody asks it early enough.

The problems Mickey faces - state persistence, identity integrity, consensus across copies, resource management for on-demand replication - are not hypothetical abstractions. They are the same problems you solve every day in distributed systems, infrastructure design, and platform governance. The difference is the stakes. When the system you are designing runs on human lives instead of server instances, every architecture decision becomes an ethical one. And if you wait until production to figure out your failure modes, someone real pays the price.