AI Slop Is YOUR Skill Issue, Stop Blaming the LLMs (+30 Skill Up Scenarios)

Bad Inputs Made Bad Outputs Long Before AI. Here's Your Guide to Embracing AI and Dominating Your Field

“Slop” is doing too much work as a word.

It’s getting slapped on everything AI-touched — regardless of craft, intent, or output quality. A LinkedIn post? Slop. A blog? Slop. A cold email that actually converts? Still slop, apparently.

This is intellectually dishonest.

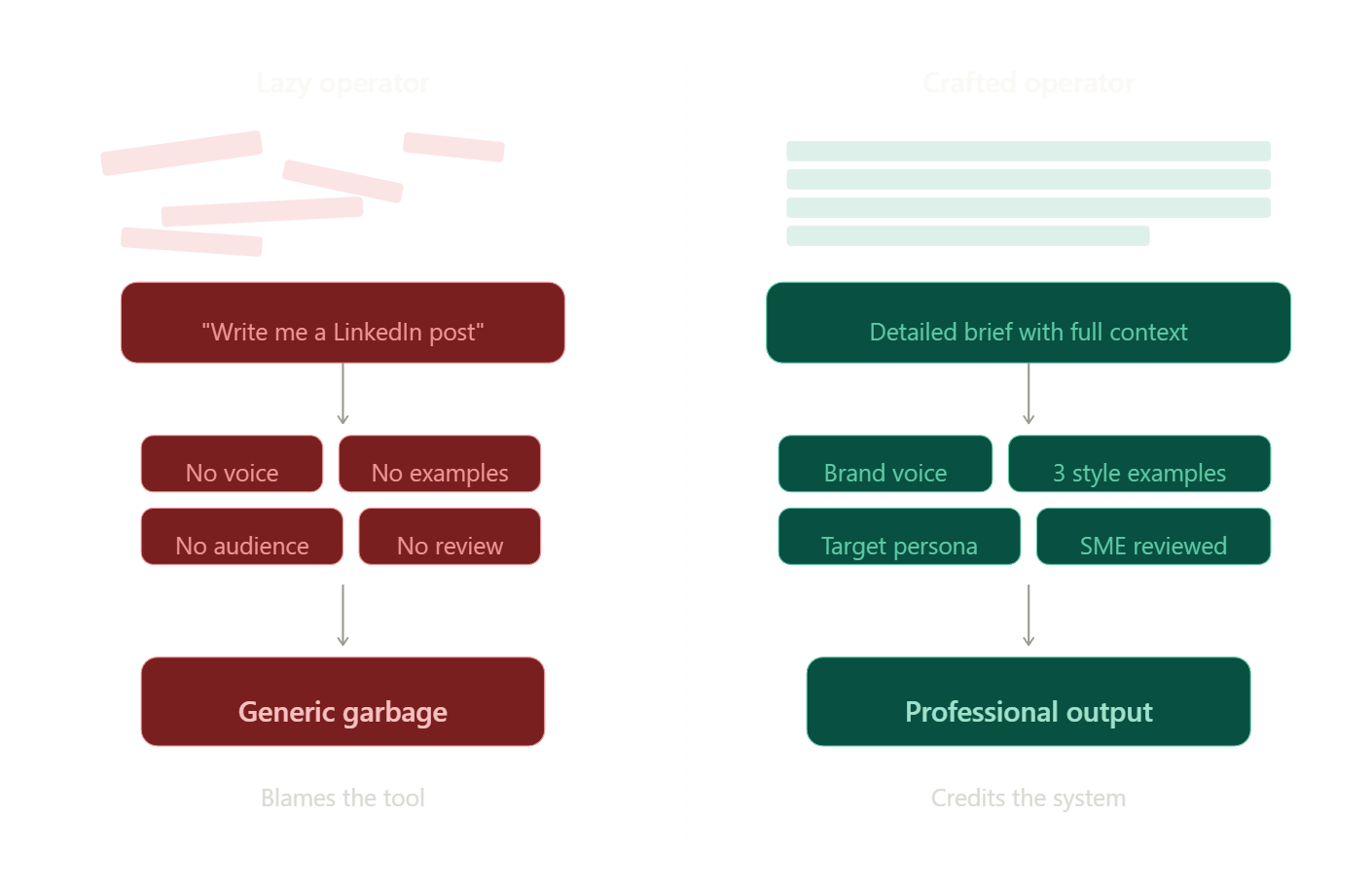

Slop is real. I won’t pretend otherwise. But it comes from lazy inputs, zero review, and no original thought — not the tool. Blaming AI for bad output is like blaming a DSLR for a bad photograph. The camera didn’t compose the shot. You did.

What follows is a 30-scenario breakdown of where the "AI slop" accusation is actually a skills gap in disguise. Same pattern throughout: the wrong way produces garbage; the right way produces professional output. The variable isn't the tool. It's the operator.

If you’ve ever dismissed something as “obviously AI” without examining the process behind it, this is for you.

What Slop Actually Is (And Isn’t)

Let me define the thing clearly so we can stop arguing past each other.

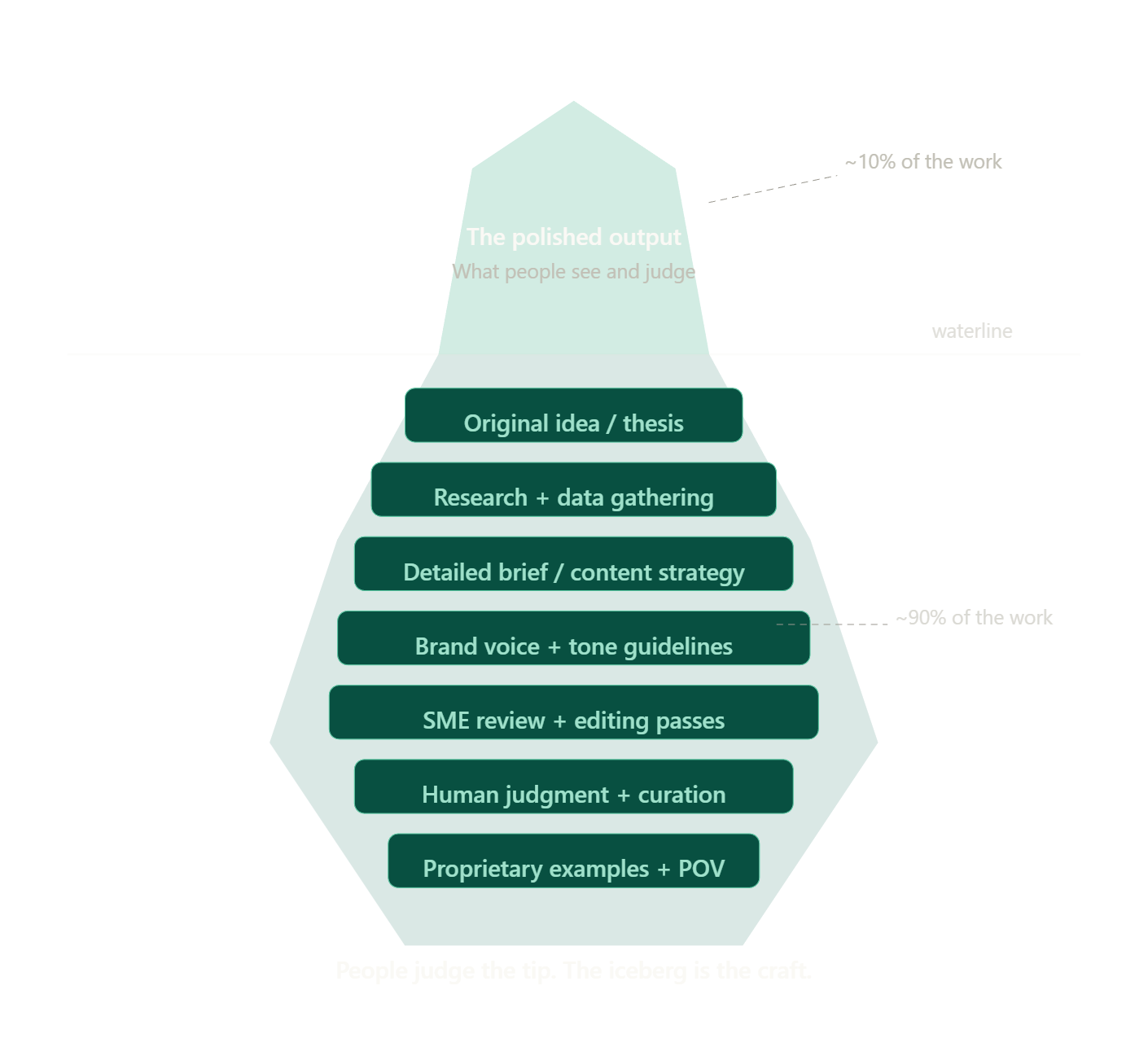

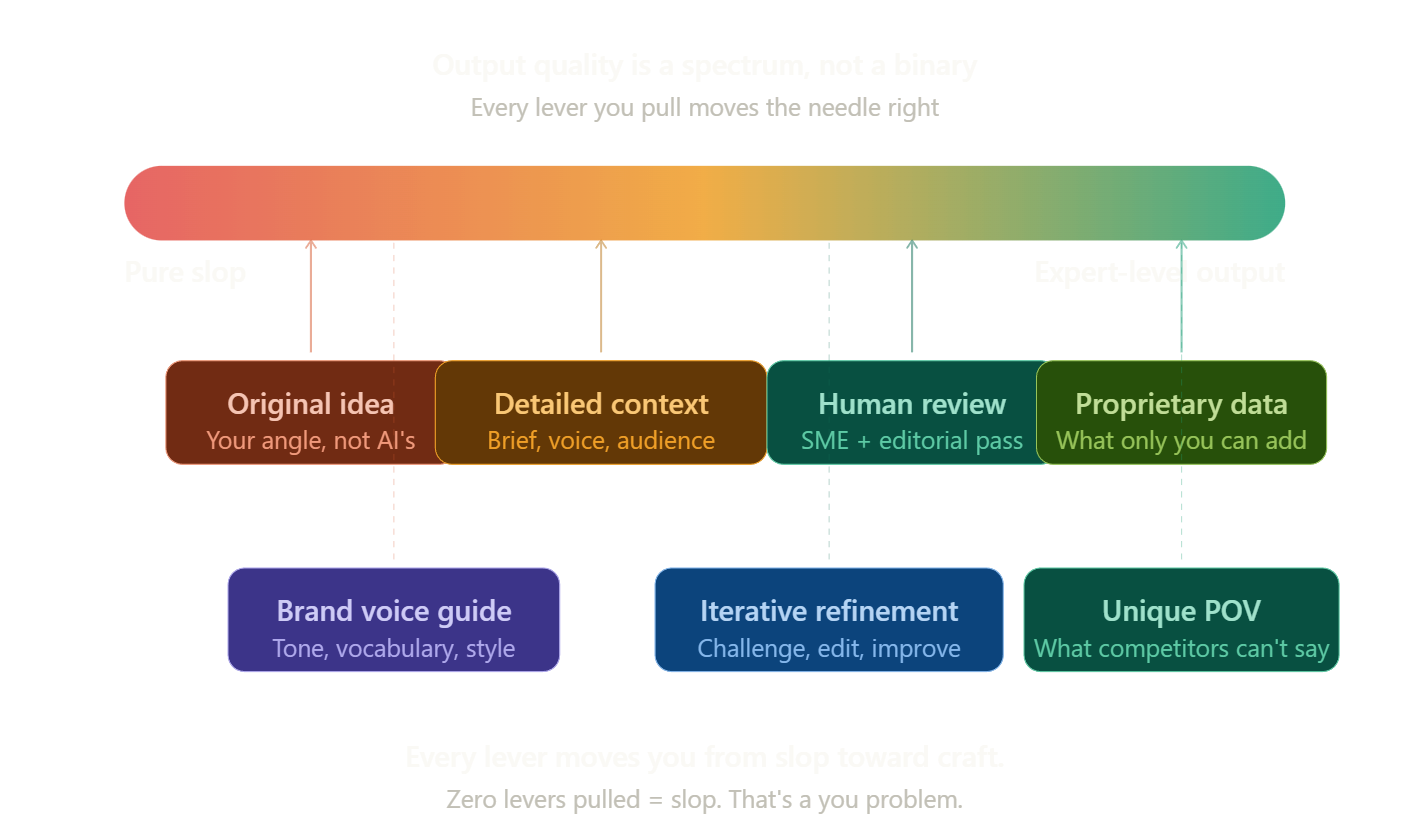

Slop = output where no original thought was added, no context was given, no editing was done, no human judgment was applied, and it was published raw. That’s a people problem.

Not slop = AI used as a collaborator, editor, accelerator, or structural scaffold for original human ideas.

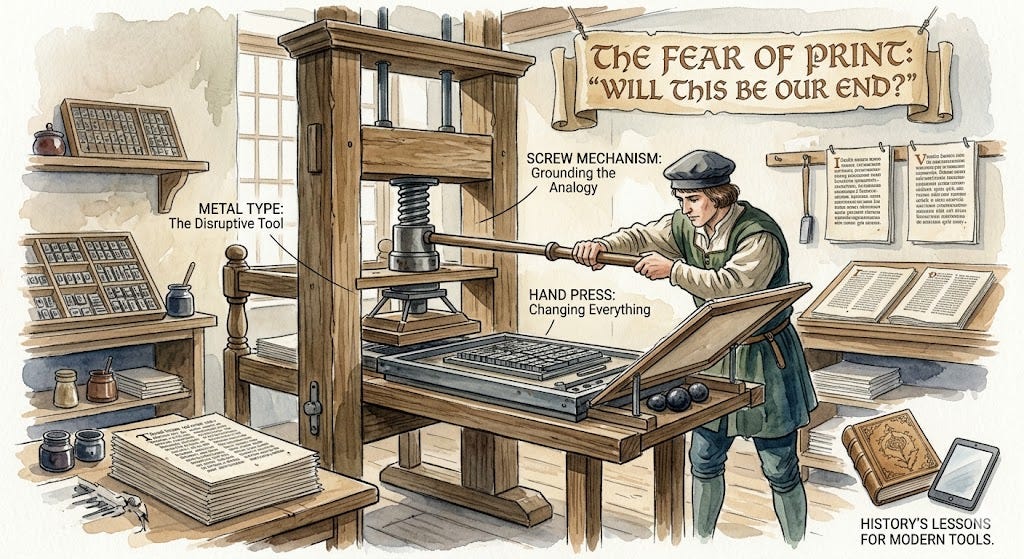

This isn't new. The printing press produced pulp fiction. Cameras were supposed to kill painting. Synthesizers were going to end music. Spreadsheets would make accountants obsolete. Every productivity tool gets this treatment. AI is not special. It's just the latest target of the same anxiety.

Here's what nobody wants to say out loud: bad writers produced bad content before AI existed. AI lowered the floor and raised the ceiling. Your job is to operate at the ceiling.

And the part that really irritates people — nobody audits whether a human LinkedIn post was written drunk at midnight or carefully crafted over three hours. AI gets held to a different standard because it threatens output monopolies, not because the standard is principled.

The 30 Scenarios to Prove That It’s Not the AI That Sucks

I've organized these across nine domains. Each follows the same structure: the objection people make, the wrong way (slop), and the right way (not slop). If you recognize yourself in any wrong-way description, the fix isn't to stop using AI. It's to use it better.

Writing & Content

1. The LinkedIn Post

“That’s clearly AI-written. So generic.”

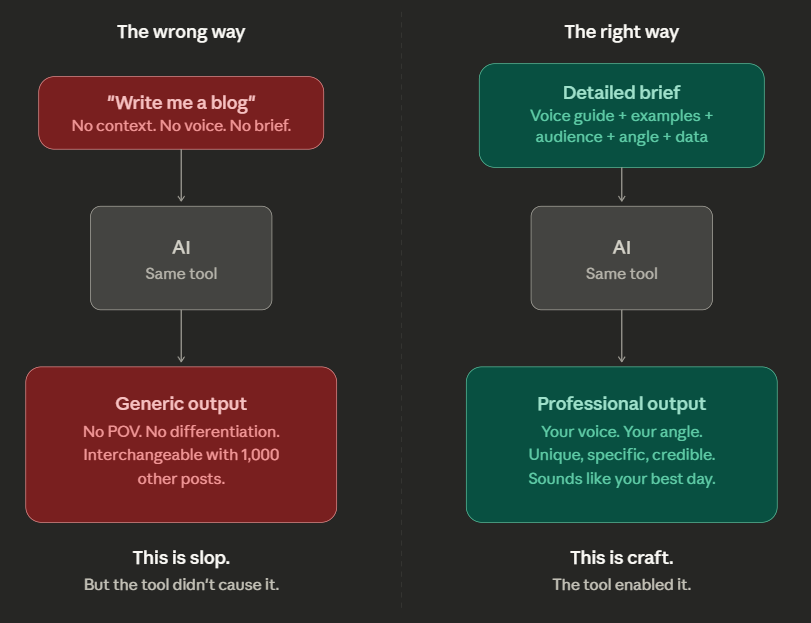

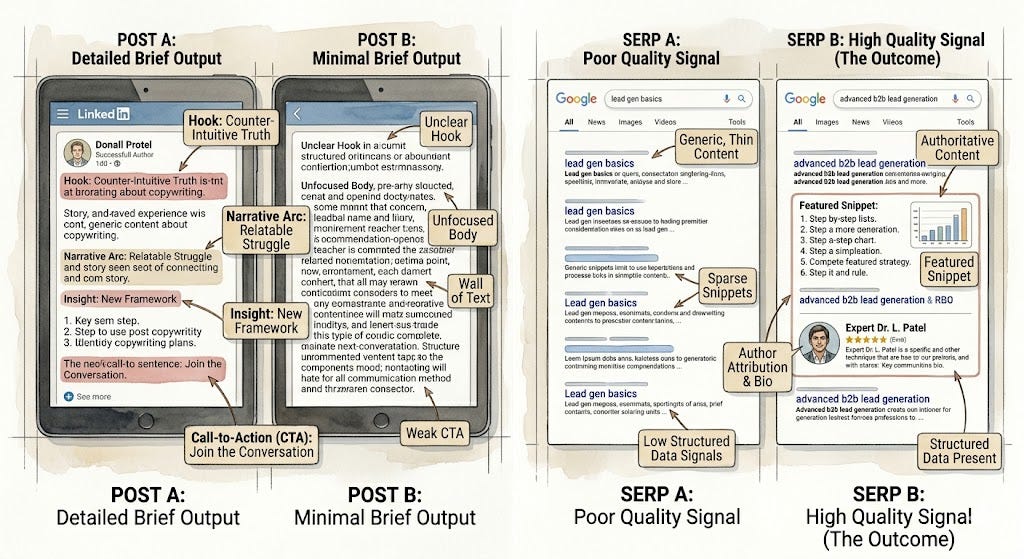

The wrong way: You type “write me a LinkedIn post about leadership.” No context, no voice, no examples. You publish what comes back. It sounds like 10,000 other posts because you gave the AI nothing to differentiate with.

The right way: You feed it your actual story. Your brand tone guide. Three example posts you admire. Explicit dos and don’ts. The specific audience. The one emotion you want to leave them with. The output sounds like you on your best writing day — just faster.

The person calling your post generic gave their AI nothing and expected something. That’s not an AI failure. That’s a brief failure. A human ghostwriter given zero context would produce the same bland result. Context is the product.
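To make "context is the product" concrete, here is a minimal sketch of a brief that refuses to run empty. The `PostBrief` class, its field names, and `build_prompt` are hypothetical, not part of any tool's API; they simply mirror the inputs listed in the right way above.

```python
from dataclasses import dataclass, field


@dataclass
class PostBrief:
    """Hypothetical container for the context the 'right way' calls for."""
    story: str
    tone_guide: str
    audience: str
    target_emotion: str
    example_posts: list[str] = field(default_factory=list)
    dos: list[str] = field(default_factory=list)
    donts: list[str] = field(default_factory=list)


def build_prompt(brief: PostBrief) -> str:
    """Assemble the brief into one prompt string; reject a brief with blanks."""
    required = {
        "story": brief.story,
        "tone_guide": brief.tone_guide,
        "audience": brief.audience,
        "target_emotion": brief.target_emotion,
    }
    missing = [name for name, value in required.items() if not value.strip()]
    if missing:
        raise ValueError(f"Brief is missing: {', '.join(missing)}")

    sections = [
        "Write a LinkedIn post based on the brief below.",
        f"My story:\n{brief.story}",
        f"Tone guide:\n{brief.tone_guide}",
        f"Audience: {brief.audience}",
        f"Emotion to leave the reader with: {brief.target_emotion}",
    ]
    # Optional sections are only included when the author supplied them.
    if brief.example_posts:
        sections.append("Posts I admire:\n" + "\n---\n".join(brief.example_posts))
    if brief.dos:
        sections.append("Do:\n" + "\n".join(f"- {item}" for item in brief.dos))
    if brief.donts:
        sections.append("Don't:\n" + "\n".join(f"- {item}" for item in brief.donts))
    return "\n\n".join(sections)
```

The validation step is the point: a one-line request like "write me a LinkedIn post about leadership" would fail here before it ever reached a model.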

2. The Blog Post

“AI blogs are thin, repetitive, and rank for nothing.”

The wrong way: “Write a 1500-word blog on cloud security.” No keyword research. No audience definition. No SERP analysis. Published with zero editing, no internal links, no subject matter expert review.

The right way: Start with keyword intent research and competitor gap analysis. Build a content brief with H2 structure, target persona, and a unique angle — ideally tied to proprietary data or a perspective only your company can credibly own. Then: AI draft → SME review → copyeditor pass → SEO audit → human-added examples and quotes.

HubSpot, Zapier, and NerdWallet built content operations worth hundreds of millions through scaled, systematized content production, long before “AI-assisted” was a phrase. The system was the differentiator, not the word count. AI doesn’t write bad blogs. Lazy briefs do.

3. The Cold Email

“I can tell it’s AI. Immediate delete.”

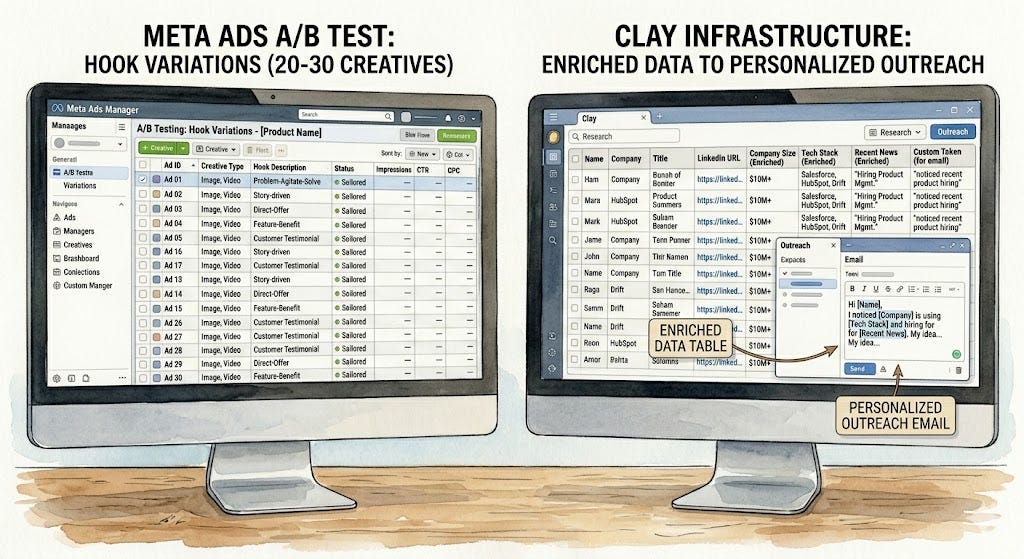

The wrong way: “Write a cold email to sell my SaaS product.” No ICP, no pain point mapping, no offer clarity. Generic opener. One template blasted to 5,000 people.

The right way: Build an ICP profile — company size, role, tech stack, known pain points, recent trigger events like funding rounds or job postings. Prompt with all of it. Specific opener tied to a real signal. Tiered personalization: AI writes the frame, merge fields pull in real data, human reviews top-priority accounts manually.

Companies like Ramp and Rippling use Clay + AI personalization workflows generating reply rates 3-5x above industry average. The “AI email” criticism applies to lazy spray-and-pray. It doesn’t apply to intent-mapped, signal-triggered outreach built on actual research infrastructure. The email isn’t the product — the research is.
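The tiered personalization described above can be sketched in a few lines. This is an illustration under assumptions, not how Clay or any specific tool works: the `FRAME` text, the field names, and the `tier` convention (1 = top priority) are all hypothetical.

```python
from string import Template

# Hypothetical frame: in practice the AI drafts this once per ICP segment.
FRAME = Template(
    "Hi $first_name,\n\n"
    "Saw that $company $trigger_event. Teams at that stage often run into "
    "$pain_point.\n\n"
    "Worth a 15-minute call?"
)

REQUIRED_FIELDS = ("first_name", "company", "trigger_event", "pain_point")


def render_email(account: dict) -> dict:
    """Fill merge fields from real account data and decide who reviews it."""
    missing = [f for f in REQUIRED_FIELDS if not account.get(f)]
    if missing:
        # No real signal means no send: the guard against spray-and-pray.
        return {"status": "skipped", "missing": missing}

    body = FRAME.substitute({f: account[f] for f in REQUIRED_FIELDS})
    # Assumed convention: tier 1 accounts go to a human before sending.
    status = "needs_human_review" if account.get("tier") == 1 else "ready"
    return {"status": status, "body": body}
```

Note what the code cannot do: it cannot invent the trigger event or the pain point. Those come from research, which is why an account with missing fields is skipped rather than padded with filler.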

4. The Press Release

“AI press releases read like robots wrote them for robots.”

The wrong way: “Write a press release about our Series B.” No quotes, no narrative, no journalist angle. Indistinguishable from the 200 other Series B releases that week.

The right way: Feed it everything — funding amount, lead investor quote, capital allocation plan, market problem being solved, two target publication angles, embargo date, boilerplate. Then find the one surprising angle about the round and structure the entire release around that hook. AI draft → PR strategist review → personalized pitch emails per journalist beat.

A press release is a commodity format. The AI isn’t the problem — the lack of a news angle is. Most human-written press releases are also terrible because the company buried the interesting thing. AI at least surfaces structure faster, freeing time to find the real story.

5. The Newsletter

“Just AI summaries of things I’ve already read.”

The wrong way: Scrape 10 articles, ask AI to summarize them, send. No voice. No spine. No reason to exist.

The right way: Curate with a strong editorial POV. Why these three things this week? What’s the through-line? What does it mean for your specific reader? Your insight, your frameworks, your predictions — AI handles formatting, transitions, and polish. You provide the thesis.

Morning Brew wasn’t AI-generated, but it was systematized content production with a strong editorial voice. Voice + curation + consistency beats “original writing” with no point of view every single time. AI lets a solo operator do what Morning Brew needed 30 people to do.

6. Social Media Caption Batching

“You can tell they just ran their whole month through ChatGPT.”

The wrong way: “Write 30 LinkedIn captions for a cybersecurity company.” Zero brand voice document. Every caption sounds identical because the prompt had no variance.

The right way: Build a brand voice document first — tone attributes, vocabulary to use and avoid, five sample captions you love, posting goals per platform. Batch by content pillar: educational, proof, opinion. Different prompt structures for each. QA pass on every hook: does it stop the scroll? Are CTAs varied? Is there a mix of emotion, logic, and proof?

7. Book Outline / Story Structure

“AI can’t write real fiction. It’s all derivative.”

The wrong way: “Write me a chapter of my novel.” AI writes it. You publish it. Voice disappears. World feels generic.

The right way: Use AI for structural scaffolding — chapter arc, character motivation logic, tension mapping, pacing notes. AI identifies plot holes, proposes B-story threads, challenges character consistency. Human writes every sentence.

Brandon Sanderson uses outlines that rival small novels before writing a single word of prose. That structural rigor is exactly what AI handles well — and it’s the part most writers skip. Using AI for architecture frees you to be exceptional at the sentence level.

8. The Substack Essay

“I can feel it was AI-written. Lacks real thinking.”

The wrong way: Dump a topic into AI. Publish the essay it returns. Safe, obvious points because the prompt was safe and obvious.

The right way: Voice-memo your raw thinking for 10 minutes. Transcribe it. Feed the transcript to AI to extract structure and identify underdeveloped arguments. Challenge the AI: “What’s the contrarian reading of this? What would a smart critic say?” Then answer those challenges yourself. Final pass is always human — is this actually what I believe, said the way I’d say it?

Marketing & Growth

9. Ad Copy

“AI ad copy performs terribly. No emotion.”

The wrong way: “Write a Facebook ad for my SaaS product.” One version tested. Declared a failure.

The right way: Feed it customer interview quotes, top objections from sales calls, the one transformation your product delivers, three competitor hooks to differentiate from. Generate 20-30 hook variants. Test six at a time. Let performance data tell you what’s resonating. Iterate on winners.

Meta’s internal research shows creative volume and variation speed are primary predictors of ad performance in their algorithm. More variants = more signal = better optimization. AI doesn’t replace emotional insight. It removes the bottleneck to testing it at scale.
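The "generate 20-30, test six at a time, iterate on winners" loop is simple enough to sketch. The function names are hypothetical, and ranking by click-through rate is an assumption; swap in whatever metric your campaign actually optimizes for.

```python
def batches(variants: list[str], size: int = 6) -> list[list[str]]:
    """Split hook variants into test rounds of `size` (six at a time)."""
    return [variants[i:i + size] for i in range(0, len(variants), size)]


def pick_winners(results: dict[str, tuple[int, int]], keep: int = 2) -> list[str]:
    """Rank hooks by click-through rate.

    `results` maps each hook to (clicks, impressions). Hooks with zero
    impressions are treated as a 0.0 rate instead of dividing by zero.
    """
    def ctr(hook: str) -> float:
        clicks, impressions = results[hook]
        return clicks / impressions if impressions else 0.0

    return sorted(results, key=ctr, reverse=True)[:keep]
```

Twenty variants become four rounds (6, 6, 6, 2), and the winners from each round seed the next batch of prompts. One version tested once tells you almost nothing.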

10. SEO Content at Scale

“Programmatic AI content is just keyword stuffing dressed up.”

The wrong way: Generate 500 pages. No differentiation. Thin content. Zero editorial review.

The right way: Content briefs with SERP gap analysis. Each page has at least one proprietary element — a data point, a case study, a tool, a framework competitors can’t copy. SME review layer, author attribution, internal linking strategy, schema markup. AI handles the draft. Humans handle the credibility signals.

Zapier’s programmatic SEO — thousands of pages, each targeting a specific integration use case — drove millions in organic traffic before the current AI wave. The critics calling it slop are playing a different game.

11. Email Nurture Sequences

“Automated email sequences feel robotic and impersonal.”

The wrong way: “Write an 8-email welcome sequence for B2B SaaS.” No persona, no stage mapping, no goal per email. Every email tries to sell.

The right way: Map the sequence to buyer journey stages: awareness → education → objection handling → social proof → decision. Write a specific goal and emotional job for each email. Mix value, proof, and ask. Segment by ICP, use case, or acquisition source. AI makes it feasible to build five sequences instead of one.

12. Competitor Analysis / Battlecards

“AI-generated competitive intel is shallow and outdated.”

The wrong way: “Compare us to [competitor]” with no inputs. Static document updated once a year.

The right way: Feed AI their pricing pages, G2/Capterra reviews (positive and negative), recent positioning shifts, sales call transcripts where competitors came up. Living document with quarterly refresh. AI re-synthesizes, human validates with sales team feedback. Focus on positioning gaps and emotional triggers in negative reviews — not surface features.

Design & Creative

13. AI-Generated Images

“AI images look fake, uncanny, and soulless.”

The wrong way: “Generate an image of a businessman shaking hands.” Use the first output. Publish it.

The right way: Develop a prompt engineering practice — reference art direction (mood, lighting, composition, style), specific negative prompts, iterative refinement. Treat generation like a photoshoot: 20+ variants, select the best, composite if needed, apply post-processing for brand consistency. Build a prompt library with locked brand aesthetic parameters.

The gap between a bad AI image and a great one is entirely in the prompting and curation — which is art direction. Comparing expert photography to a first-draft prompt is not a fair test.

14. AI Video

“AI video is uncanny valley. Nobody wants to watch a fake spokesperson.”

The wrong way: Default avatar, generic script, no brand customization.

The right way: Custom-trained avatar on real spokesperson footage, professional script, mixed with real B-roll and motion graphics. Use it specifically for product tutorials, FAQ responses, localized versions — high-volume, low-touch content. Reserve human talent for flagship content.

15. Presentation Design

“AI decks look templated and lifeless.”

The wrong way: “Make me a 20-slide deck about our product.” Accept the default structure. Bullet points everywhere.

The right way: Use AI for narrative structure, slide-level argument mapping, copy refinement. Use a designer for the visual layer. Challenge defaults — ask AI what the three strongest counterarguments to your thesis are, then build slides that preemptively answer them. Rewrite bullet-heavy slides into single declarative statements. That’s better design thinking, not just aesthetics.

Coding & Engineering

16. AI-Assisted Code

“AI-written code introduces bugs and security vulnerabilities.”

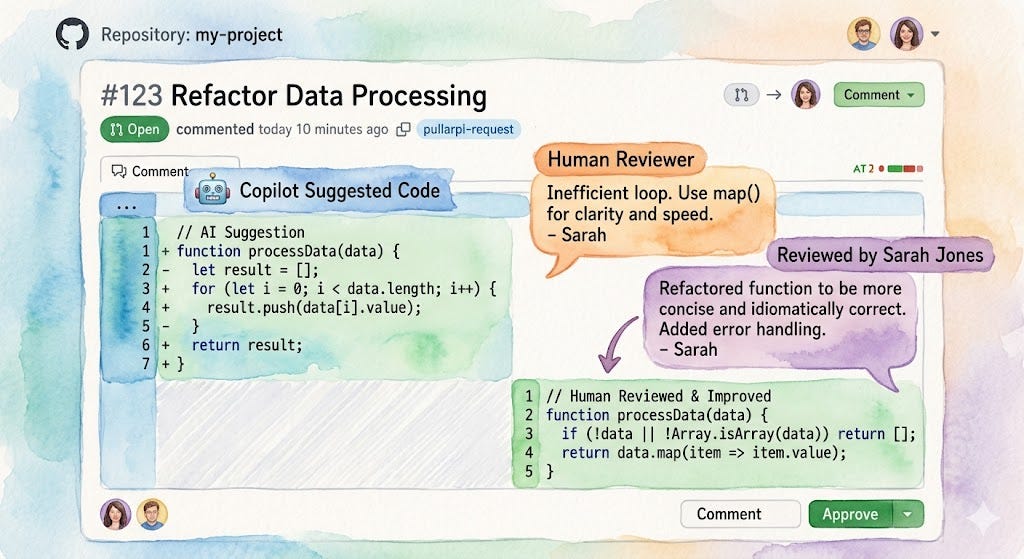

The wrong way: Accept every Copilot suggestion without reading it. Use AI for complex business logic without test coverage. No security audit.

The right way: Treat AI like a junior developer. Review every PR. Understand what it did and why. Reject suggestions that don’t fit the architecture. AI is best for boilerplate and routine patterns. Complex domain logic needs human authorship and AI review — not the reverse. Standard security review applies regardless of who (or what) wrote the code.

GitHub’s own controlled experiment found that developers using Copilot completed a benchmark coding task 55% faster. Engineers calling AI code “inherently bad” are conflating “code I didn’t write” with “code nobody reviewed.” The review process was always the safety mechanism. AI didn’t change that.

17. Boilerplate and Scaffold Generation

“AI-generated boilerplate introduces inconsistencies across the codebase.”

The wrong way: Generate scaffold for each module independently. No review pass.

The right way: Build a company-specific prompt template that enforces naming conventions, error handling patterns, folder structure, and coding standards. Architect reviews all generated scaffolds before they touch main. AI reduces the blank-page problem, not the judgment problem.

18. Test Case Generation

“AI-generated tests don’t test what actually matters.”

The wrong way: “Generate unit tests for this function.” Happy-path tests only. Generated once. Forgotten.

The right way: Prompt specifically for edge cases, boundary conditions, known failure modes, integration behavior. Then add the cases AI missed based on domain knowledge. AI-generated tests are a floor, not a ceiling — they cover the obvious so humans can focus on the subtle.
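Here is what the floor-versus-ceiling difference looks like in practice. `apply_discount` is a hypothetical function invented for this illustration, and the rounding-to-cents rule is an assumed piece of domain knowledge, the kind of case a human adds after the AI has covered the obvious ones.

```python
def apply_discount(price: float, percent: float) -> float:
    """Hypothetical function under test: price after a percentage discount."""
    if price < 0:
        raise ValueError("price must be non-negative")
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


def test_happy_path():
    # What a bare "generate unit tests" prompt tends to stop at.
    assert apply_discount(100.0, 20) == 80.0


def test_boundaries():
    assert apply_discount(100.0, 0) == 100.0    # no discount
    assert apply_discount(100.0, 100) == 0.0    # full discount
    assert apply_discount(0.0, 50) == 0.0       # free item


def test_known_failure_modes():
    # Each invalid input must be rejected, not silently computed.
    for bad_price, bad_percent in [(-1.0, 10), (10.0, -5), (10.0, 101)]:
        try:
            apply_discount(bad_price, bad_percent)
        except ValueError:
            continue
        raise AssertionError("expected ValueError")


def test_domain_rule_added_by_a_human():
    # Assumed domain rule: results are rounded to cents.
    assert apply_discount(19.99, 15) == 16.99
```

The first test is the happy path. The next two are what you get by prompting for boundaries and failure modes. The last one only exists because someone who knows the business wrote it.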

Operations & Automation

19. SOPs and Process Documentation

“AI-written SOPs are generic and don’t reflect how we actually work.”

The wrong way: “Write an SOP for customer onboarding.” No company context. No workflow specifics. Static PDF. Never updated.

The right way: Voice-memo the actual process as you do it. Transcribe. Feed to AI for structure. Team review for gaps. Build a lightweight system with a quarterly AI-assisted review prompt: “What has changed in this process since this was last updated?”

20. Meeting Summaries

“AI meeting notes miss context and nuance.”

The wrong way: Auto-publish the transcript summary without review. Treat all meeting types the same.

The right way: AI summary as a starting draft. Human who attended edits for decisions vs. discussions, unresolved tensions, action item owners and deadlines. Standup summaries? Fully automated. Strategic planning sessions? Human synthesis of subtext and disagreement. Action items formatted with owner + due date + follow-up trigger.
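The "owner + due date + follow-up trigger" format is easy to enforce if action items are structured data rather than free text. A minimal sketch, assuming hypothetical names throughout; only the standup and strategic-planning rules come from the scenario above, and the default for other meeting types is my assumption.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class ActionItem:
    task: str
    owner: str
    due: date
    follow_up_trigger: str  # what prompts a check-in, e.g. "no update by due date"

    def render(self) -> str:
        """Format one action item as a single summary line."""
        return (
            f"- {self.task} | owner: {self.owner} | due: {self.due.isoformat()} "
            f"| follow-up: {self.follow_up_trigger}"
        )


# Assumed routing table: how much human review each meeting type gets.
REVIEW_POLICY = {"standup": "auto_publish", "strategic_planning": "human_synthesis"}


def review_policy(meeting_type: str) -> str:
    """Unknown meeting types default to a human editing the AI draft."""
    return REVIEW_POLICY.get(meeting_type, "human_edit")
```

Because every field is required, an action item with no owner or no date cannot be created at all, which is exactly the gap raw transcript summaries tend to leave.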

HR & People Ops

21. Job Descriptions

“AI job descriptions all sound the same.”

The wrong way: “Write a JD for a Senior Product Manager.” Generic competency language. No bias check.

The right way: Feed it the team structure, the three most important problems this person solves in year one, what failure and success look like, actual culture attributes. Then ask AI to rewrite the JD from the candidate’s POV: “Why would the best person for this role be excited?” Use AI to flag gendered language, credential inflation, and culture-fit language that screens out good candidates.

22. Performance Review Frameworks

“AI-generated review templates are impersonal and lead to copy-paste feedback.”

The wrong way: “Write a performance review for an engineer.” Same template for all roles.

The right way: Feed AI the employee’s role, stated goals, manager’s observations, specific examples. Role-specific rubrics — IC vs. manager vs. director have fundamentally different success criteria. Use AI to help managers write more specific, behavior-based feedback. Not to replace the manager’s assessment.

23. Interview Question Banks

“AI interview questions are textbook and easily googled.”

The wrong way: “Give me 20 interview questions for a marketing manager.” Same bank for every candidate.

The right way: Map questions to specific competencies. Mix behavioral, situational, and technical formats. Use AI to generate candidate-specific follow-ups based on resume and portfolio. Design questions that reveal thinking process, not just answers. AI helps build the rubric for strong vs. weak responses.

Legal & Compliance

24. Contract Templates

“AI-generated contracts miss nuance and create legal liability.”

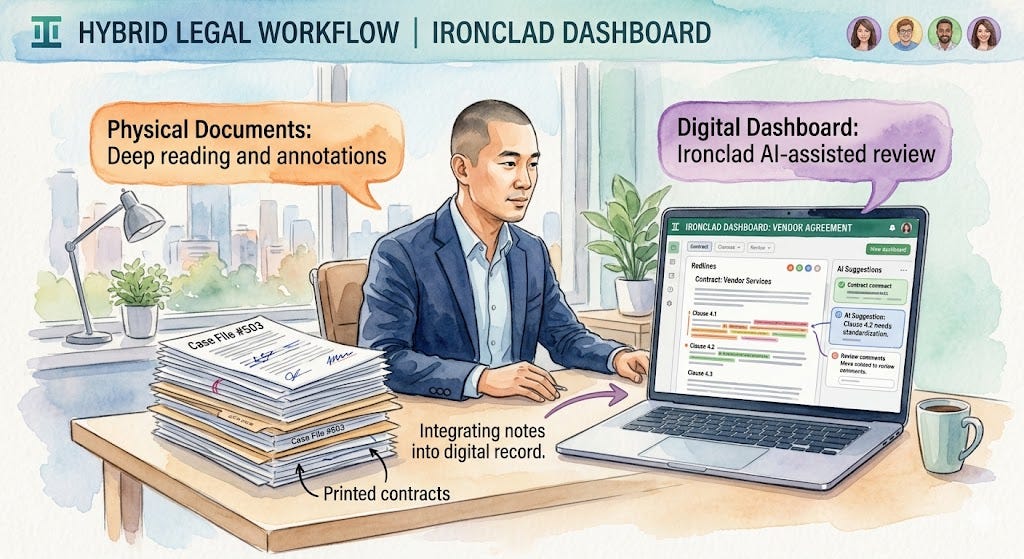

The wrong way: Use AI contract output and send without legal review.

The right way: AI produces first draft with standard clauses. Legal counsel reviews for jurisdiction-specific requirements, liability caps, indemnification nuance. AI flags high-risk clauses for the specific deal type. Lawyer still exercises judgment on risk, strategy, and negotiation.

Legal AI tools like Harvey and Ironclad are used by AmLaw 100 firms — not to replace lawyers, but to handle the 60% of legal work that is pattern-matching, freeing time for the 40% that requires real judgment. A well-prompted legal AI reviewed by counsel is not slop. It’s leverage.

25. Compliance Documentation

“AI compliance docs don’t keep up with regulatory changes.”

The wrong way: Generate compliance policy once. Never update.

The right way: Build a review workflow — AI monitors regulatory updates, flags relevant changes, drafts proposed policy amendments for compliance officer review. Map compliance docs to actual internal processes. Segment by jurisdiction, product type, customer type. AI makes maintaining multiple variants feasible where it previously wasn’t.

Science & Research

26. Literature Review Synthesis

“AI-synthesized research misses nuance and misrepresents findings.”

The wrong way: “Summarize the research on CRISPR gene therapy.” Publish the AI output as your review.

The right way: Use AI to identify the most-cited papers, extract methodology and conclusions, flag conflicting findings. Then the researcher reads primary sources and applies domain judgment. AI synthesis is a map — it tells you where to focus your reading, not what to conclude. Every claim traces to a source the researcher actually read.

27. Grant Proposal Drafting

“AI grant proposals read like they were written by someone who doesn’t understand the science.”

The wrong way: “Write an NIH grant proposal for my research on neuroplasticity.”

The right way: Researcher provides specific aims, preliminary data summary, methodology notes, target study section priorities, funded proposals for style reference. AI structures the narrative arc and strengthens the significance sections. Researcher writes the technical content. Standard PI review. AI shortens drafting from weeks to days without lowering the quality bar.

Education & Personal Productivity

28. AI Tutoring

“AI tutors give wrong answers and students stop thinking critically.”

The wrong way: Student asks AI for answers. Copies them.

The right way: Student uses AI to explain concepts at their level, ask follow-ups, test understanding through dialogue. AI makes the Socratic method available at scale. Students are taught to verify answers against textbooks and primary sources, so critical thinking becomes a meta-skill that AI strengthens rather than replaces.

29. Language Translation

“AI translations are technically correct but culturally tone-deaf.”

The wrong way: Translate marketing copy directly. No localization brief. One-pass, no native review.

The right way: Brief includes cultural context, local idioms to avoid, brand voice in target language, regional nuances. AI translation → native speaker review for cultural appropriateness and naturalness. Segment by content type: legal docs get accuracy-first AI + human legal review. Marketing gets native creative review.

30. Personal Writing

“If AI touched it, it’s not authentically yours.”

The wrong way: Ask AI to write your personal essay from scratch. Accept all edits without reading.

The right way: Write your full rough draft first. Then bring AI in for structural feedback, paragraph tightening, clarity improvements. Treat edits like notes from a trusted editor: accept what rings true, reject what doesn’t sound like you. Explicitly instruct: “Do not change my sentence rhythm. Do not standardize my vocabulary. Only fix clarity and grammar.”

Every professional writer has had a developmental editor, a copy editor, a publisher’s team. That’s multiple humans touching your “authentic” work before it reaches a reader. The authenticity was never in the isolation. It was in the originality of the thought.

The Slop Test

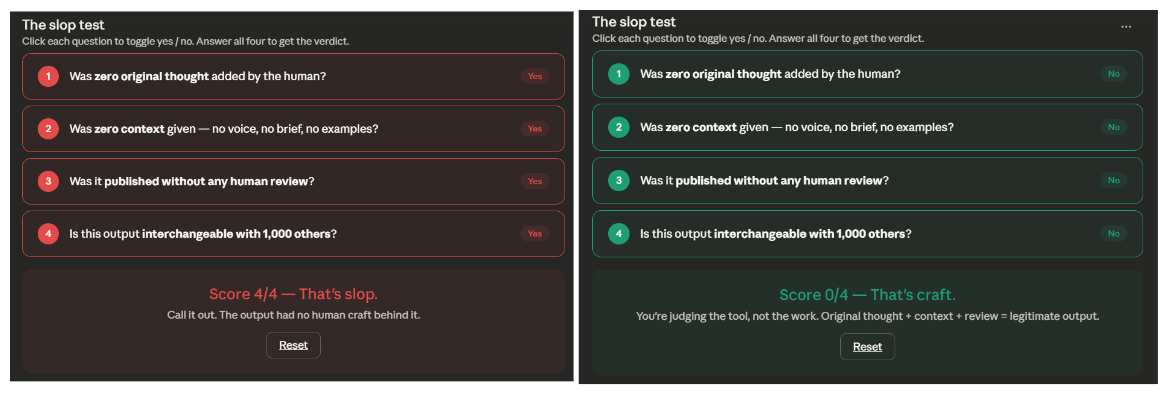

Before calling anything slop, run it through four questions. Score one point each time the answer to the first half of the question is yes:

1. Was zero original thought added by the human? Or was the idea, the angle, the insight, the experience theirs?

2. Was zero context given? Or was there a brand voice, a persona, a brief, examples, guardrails?

3. Was it published without any human review? Or did a skilled human apply judgment before it went out?

4. Is this output interchangeable with 1,000 other outputs? Or does it have a POV, a specific audience, a unique element that only this person or company can credibly provide?

Score 4/4? That’s actual slop. Call it out.

Score 0-2? You’re judging the tool, not the work.
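If you want the test as a checklist you can actually run, here is a minimal sketch. The class and field names are hypothetical; each field is True when the answer to the first half of the matching question is yes. The article gives verdicts for 4/4 and for 0-2 only, so a score of 3 returns `None` here rather than inventing one.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SlopCheck:
    no_original_thought: bool   # question 1
    no_context_given: bool      # question 2
    no_human_review: bool       # question 3
    interchangeable: bool       # question 4

    def score(self) -> int:
        """Count how many of the four slop conditions are true."""
        return sum([
            self.no_original_thought,
            self.no_context_given,
            self.no_human_review,
            self.interchangeable,
        ])


def verdict(check: SlopCheck) -> Optional[str]:
    """Map a score to the verdicts stated above; 3 is left undefined."""
    score = check.score()
    if score == 4:
        return "actual slop"
    if score <= 2:
        return "judging the tool, not the work"
    return None  # a score of 3 has no stated verdict
```

Notice that none of the four fields asks whether AI was involved. That omission is the whole argument.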

The Meta-Argument

Here’s where I’ll lose some people, and I’m fine with that.

The people loudest about AI slop are often not producing anything themselves. “Nothing” is not a higher quality bar than “AI-assisted something.” Silence is not craftsmanship. It’s just silence.

Ideas are the scarce resource. Not words. AI makes the expression of ideas faster. If your original idea is interesting, AI-polished language doesn’t dilute it — it amplifies it.

The infrastructure analogy settles this for me: nobody asks whether a building was designed with CAD or hand-drawn blueprints. They ask if it works. If it’s beautiful. If it serves the people inside it. Judge the output on what it delivers to the person receiving it.

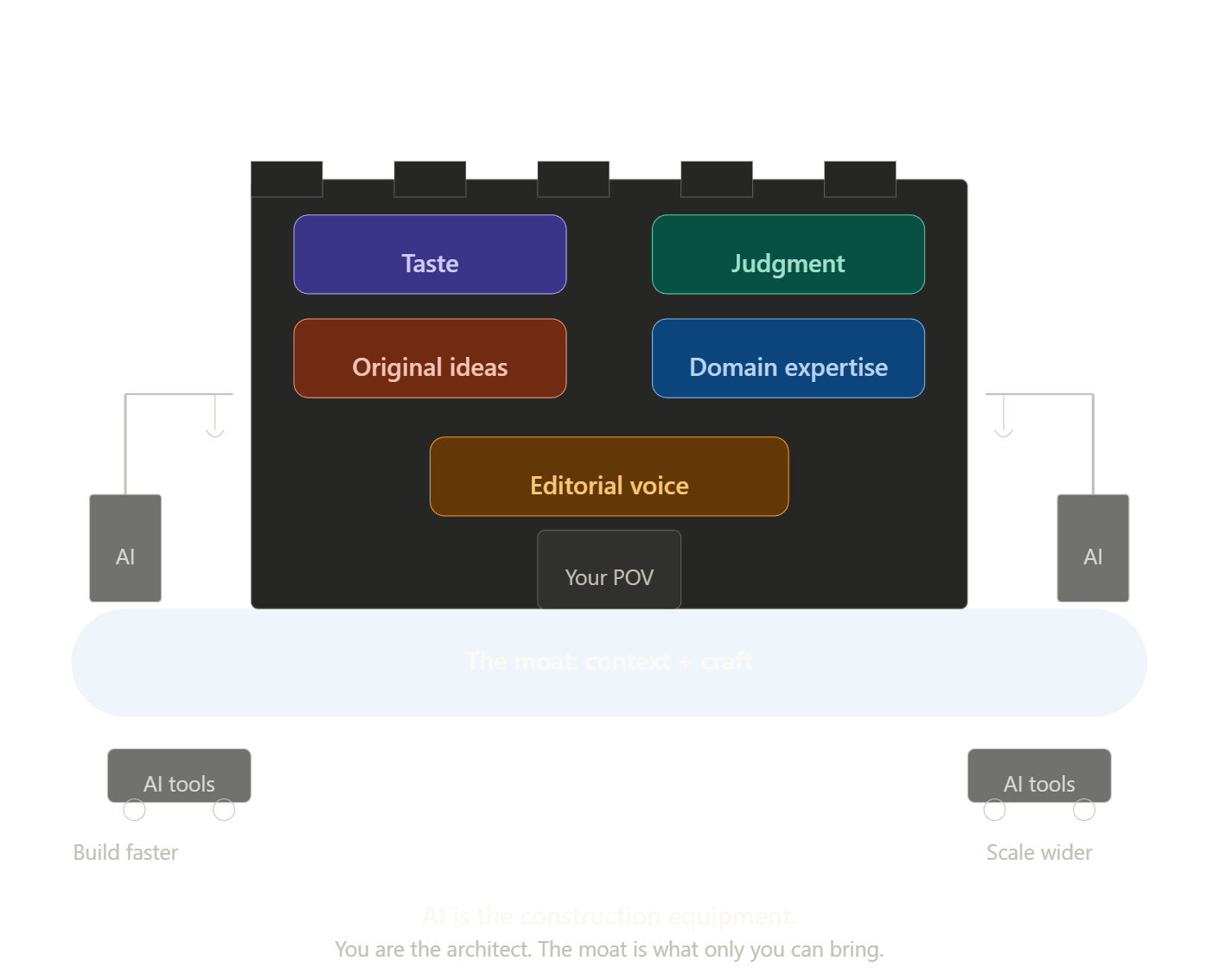

We’re entering an era where a solo operator can produce at the output level of a small team. That’s not a threat to quality. It’s a redistribution of creative leverage toward people with taste, judgment, and original ideas — and away from people whose only competitive advantage was the capacity to produce volume manually.

The bar has never been: was this made without assistance?

The bar has always been: is this good? Does it serve its purpose? Is there a real thought behind it?

The Bottom Line

Slop is a skill issue dressed up as a moral stance.

The problem was never AI. The problem was always people with nothing to say, saying it loudly.

A bad carpenter blames the tools. A bad prompter blames AI. Same thing.

I’d take 100 AI-polished original ideas over 10 “pure” pieces that took three weeks and said nothing new. Every time.

The people who figure this out first will build things the rest of the market spends years trying to catch up to. The people who don’t will write long posts about authenticity while producing nothing.

The tool doesn’t care about your opinion of it. It just works for whoever shows up with something worth saying.

If this changed how you think about AI and output quality, share it with someone still stuck in the “slop” binary. The conversation needs more nuance and less posturing.